Correlation Is Not Causation. Your AI Doesn’t Know the Difference.

Early in my career, I was a caseworker for people experiencing homelessness in Indianapolis, Indiana. I wouldn’t have used the word at the time, and I doubt any of my colleagues would have either, but causality mattered to me every single day.

I wanted to know if what I was doing worked. Not in general. Not on average. For the person sitting in front of me. And that person was never average. They were in a specific situation, with a specific history, and whatever I did had to be tailored to them.

Were they a family, newly without housing, still together, living in their car? Or had they been on the streets for most of their lives, navigating addiction and mental health challenges that had compounded over the years? The answer to that question changed everything about what I should do next.

Evaluation reports that told me a program worked on average didn’t help me. I needed to understand this person: to listen, to pick up on what their story was telling me about their situation, their condition, their context. I needed to know, for people like this, what I could do to make a real, meaningful difference.

Those were causal questions. I just didn’t have that word for them yet.

What I also didn’t have, and what most practitioners still don’t have, is the ability to trust the evidence beneath any recommendation. And trust is the centerpiece of this. If a predictive algorithm gives me a recommendation based on correlations that may or may not reflect the true cause of anything, I can’t fully trust it. If I act on it and my client comes back worse off, I wouldn’t necessarily know why. The algorithm wouldn’t tell me. I’d just feel it in the results I was capable of getting. And the client would feel it most of all.

Here is the harder truth: as a frontline practitioner, I would not have been able to tell the difference between a predictive and a causal recommendation. But the difference would show up. An algorithm drawing correlations between a client’s mental health history, their criminal record, maybe even their race or ethnicity, could have shaped what I offered them, what referrals I made, what services I connected them to, without my ever knowing that what I was acting on was correlation dressed as cause. I would have been perpetuating baked-in biases and harm without realizing it. And I would have cared deeply if I had found out.

This is happening right now, at scale, across the social sector.

The AI Gold Rush and What It’s Missing

The social and education sectors are in the middle of an AI gold rush. Large language models are being woven into case management platforms. Ed Tech abounds. AI-powered risk-scoring tools are shaping decisions about who receives services and at what intensity. Algorithmic outcome prediction is appearing in funder reports as if it were evidence. Every week, a new vendor promises that its AI can tell you what is working, who is at risk, and where to direct your resources. And this wave is cresting at a precarious moment for the sector. Federal evidence infrastructure is being dismantled. Philanthropy is pulling back rather than stepping in.

Which means the promise AI is making to fill that gap — that it can tell you what is working, who is at risk, where to direct your resources — matters more than ever. And it is, in most cases, unfulfilled. Not because the technology is immature, but because the question it is designed to answer is the wrong one. AI systems built on machine learning and large language models are extraordinarily good at finding patterns in historical data. They are not designed to tell you what caused an outcome. And in a sector whose entire purpose is to change people’s outcomes, causation is the only question that ultimately matters.

Judea Pearl, the Turing Award-winning researcher whose work defined modern causal inference, put it plainly:

Project Evident is committed to saying the hard thing when the stakes are this high. But this is not a rejection of AI. It is an insistence that AI’s transformative potential for evidence generation can only be fulfilled if the field demands one foundational condition: that the tools we build, fund, and trust are grounded in causality.

What Practitioners and Funders Actually Need

The practitioner’s motivating question is always causal: Is what I’m doing actually making a difference for this person, in this situation?

Standard AI cannot answer that question. An AI-powered dashboard can tell a program director that youth who attended more sessions had better results. It cannot tell her whether the sessions caused the improvement, or whether those youth were already more engaged and more likely to succeed regardless. It sees the pattern. It cannot see through it.

AI’s promise in the social and education sector — better outcomes, achieved more efficiently, at greater scale — can only be kept if the underlying evidence is trustworthy. AI that produces faster correlations is not the same as AI that produces better, cheaper, faster outcomes. For practitioners making real-time decisions about real people, that distinction is everything. For funders, the stakes are equally high. When AI-generated analysis drives investment decisions and that analysis is correlational, funders risk systematically rewarding programs selected for success rather than those that generated it. Funders who take evidence seriously should ask a direct question of every AI-powered evaluation tool they encounter: Does this tell me what caused the outcome, or does it only tell me what correlated with it?

From Pattern to Proof: A Continuum of Causal Certainty

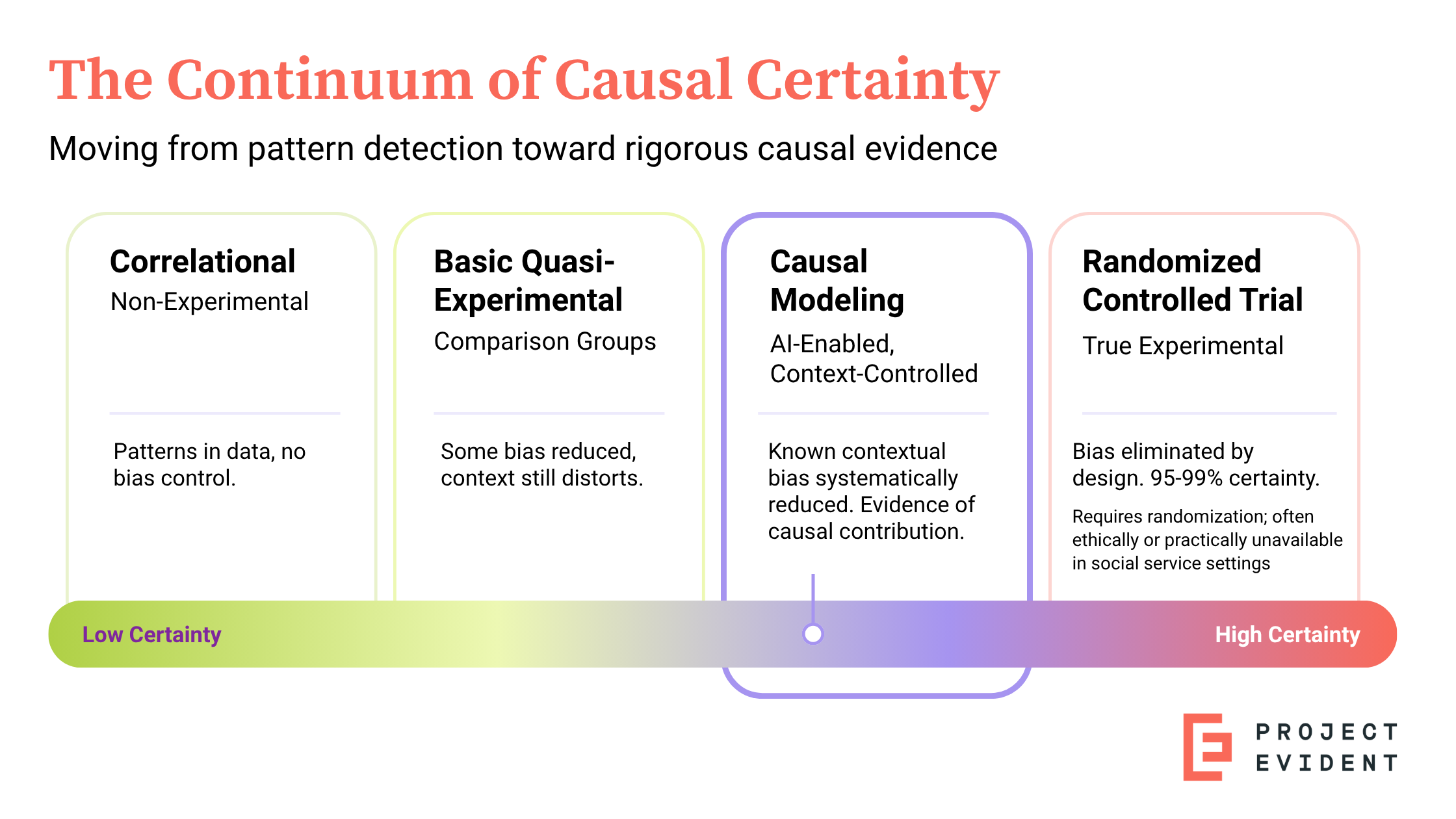

The move from AI-generated correlation toward something genuinely trustworthy requires moving further along the Continuum of Causal Certainty (see graphic below).

Step one: Correlational AI. Almost all AI stops here — at finding patterns in historical data. Because those patterns reflect existing biases in who was served and how, correlational AI cannot tell you what caused an outcome.

Step two: Basic quasi-experimental research. This constructs comparison groups to reduce bias. But because traditional quasi-experimental studies can only control for a small set of variables that the researcher deliberately built into the design, context still distorts the comparison.

Step three: AI-enabled causal modeling. This is where Project Evident operates. Because it draws on the rich administrative and operational data that programs already collect for every participant, it can incorporate far more contextual variables into the matching process than a traditionally designed study could. The result: people grouped by similar histories, challenges, and starting points, then compared based on what happened to those who experienced the program differently. Similar people, similar circumstances, different experiences. That comparison functions as a natural experiment.

What it produces is evidence of causal contribution. Not the certainty that only a randomized controlled trial can establish. However, it does provide a more rigorous form of causal evidence than real-world program data has previously supported. Credible enough to act on. Honest about what it cannot yet claim. Precise enough to point toward what the most rigorous evaluation should test next.

Why the Architecture Has to Be Different

The AI-powered analytics market in the social and education sectors is crowded and getting more so. Dozens of vendors offer AI-driven dashboards, predictive tools, and outcomes reporting. What almost none of them offer is causal modeling capability designed for and with practitioners.

The problem isn’t a lack of AI capability, but a lack of infrastructure that meets causal standards. Where AI, as typically deployed, treats all variables as equivalent inputs to a pattern-matching problem, a causal approach imposes structure: it begins with the program’s own theory of change, carefully distinguishes between context (who a person is and where they are starting from), experience (which program elements, strategies, and combinations they actually received), and outcome (what changed). It then uses AI-assisted methods to find comparison groups within the data and surface what a standard AI model cannot: evidence of causal contribution.

The output is not a correlational prediction and not controlled experimental proof. It is a rigorous, theory-grounded case that specific program elements, at specific intensities and in specific combinations, causally contributed to better outcomes for people in a specific context. Not certainty. Something more honest — and for a practitioner making decisions in real time, considerably more useful.

This is the approach that Project Evident has been building toward — a practical capability that program directors and practice leaders can use to build and run their own causal models using the data their organization already collects. The same platform then supports the design of practitioner-facing applications: dashboards, recommender tools, scenario planners, built from the causal model’s outputs and tailored to the decisions frontline staff actually make. Evidence gathering, generation, and use are connected in a single workflow, with the practitioner at the center of every step.

Project Evident’s position is clear and deliberate: causality is not a feature we add to AI. It is the foundation on which we are building an entirely different, AI-supported approach to evidence generation, built with practitioners rather than for them.

A Field-Level Imperative

We are at an inflection point.

This is exactly the moment for the sector to insist on durable infrastructure. Not just better tools, but the governance, analytic capacity, and decision-making systems that make improvement sustainable over time.

The case for causal AI is part of that larger argument. So is the case for strategic evidence planning, for practitioner-centered data systems, and for what a genuine evidence infrastructure for impact looks like at scale. These are not separate conversations; they are the same conversation, happening at the worst possible time for the sector to be distracted by shortcuts.

Before the sector locks in around correlational AI, the field has an opportunity and, we would argue, an obligation to insist on something better. To say: we will not accept AI recommendations without adherence to a set of causal standards as evidence. We will not fund correlational AI predictions as outcomes. We will demand that AI tools be capable of answering the causal questions that actually matter for improving people’s lives.

It requires funders who know what to demand from AI-powered evaluation investments. It requires practitioners who understand what AI can and cannot support. It requires researchers willing to build methods and tools that serve frontline decision-making, not just scholarly research. It requires organizations willing to hold the line on causal rigor: not because rigor is an end in itself, but because without it, the evidence AI generates cannot be trusted to be fair to the people being served, or to the truth of what is actually working.